Hi Sanjin, I recently faced the same problem with aggressive AI bots. The worst of all was ClaudeBot.

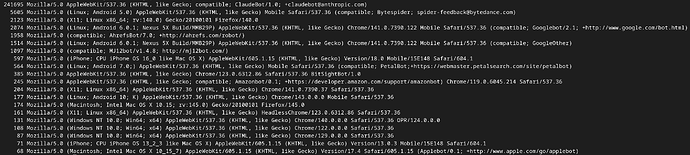

The screenshot above shows an extract of my web server log from one month ago: the user agents are ordered by the number of requests they sent to one of my sites over a couple of hours. Your IT team should be able to generate the same kind of output.

You can see that ClaudeBot sent over 40’000 times more requests than the second bot (Bytespider) and over 100’000 times more than Googlebot.

To get out of this hell, I edited my .htaccess and added the following lines:

SetEnvIfNoCase User-Agent "ClaudeBot" BadBot

Order Allow,Deny

Deny from env=BadBot

Allow from all

Each request from ClaudeBot now gets a “403 Forbidden” response, and my website has regained peace and serenity.

You may find other examples using different syntaxes. I hope you find a way to identify and solve the problem.
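As one example of a different syntax: the Order/Allow/Deny directives above belong to the older Apache 2.2 access model, which Apache 2.4 only supports through mod_access_compat. A rough equivalent in the 2.4 style might look like the sketch below. It assumes mod_setenvif and mod_authz_core are enabled and that your host allows these directives in .htaccess, so please test it on your own setup before relying on it.

```apache
# Sketch for Apache 2.4 (assumes mod_setenvif and mod_authz_core are loaded).
# Flag any request whose User-Agent contains "ClaudeBot" (case-insensitive).
SetEnvIfNoCase User-Agent "ClaudeBot" BadBot

# Grant access to everyone except requests carrying the BadBot flag.
<RequireAll>
    Require all granted
    Require not env BadBot
</RequireAll>
```

To block further bots, you could add one more SetEnvIfNoCase line per user agent (for example "Bytespider"), each setting the same BadBot variable.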